Responsible Innovation in Credit: Why Ethical AI Creates Competitive Advantage

The perceived conflict between profit and ethics in AI credit isn’t a law of nature; it’s an incentive problem. And incentive problems can be designed away. At Riverty, we believe ethical credit decisioning isn’t a constraint on growth – it’s the foundation of sustainable performance and scalable responsible credit. By embedding fairness, transparency, and accountability into how credit markets operate through responsible AI in financial services, we can align regulatory readiness, consumer trust, and long-term profitability – at scale.

This requires reframing ethics from moral restraint to system architecture. Responsible innovation in credit means treating governance, data sovereignty in financial services, and auditability not as compliance burdens, but as core capabilities that enable competitive advantage.

Why the Profit-Ethics Dilemma Feels Real

Public debate about AI credit often compresses complex tensions into one simple storyline: lenders optimize for profit by extracting maximum data and personalizing aggressively, while ethical behavior demands restraint, transparency, and due process. The perceived trade-off suggests that what’s good for business is bad for borrowers, and vice versa.

From the borrower’s side, this narrative feels plausible. Hyperindividualized credit can feel like surveillance: data collected without clear consent, decisions made without explanation, prices tailored to moments of vulnerability. When credit systems optimize against people rather than for them, the relationship shifts from partnership to exploitation.

From the lender’s side, the tension also feels real. Ethical safeguards – bias testing, explainability requirements, conservative feature policies, robust recourse mechanisms – can appear as costs, compliance burdens, and competitive disadvantages. A bank investing heavily in fairness may carry higher short-term expenses than a competitor pushing automation and expansive data use more aggressively.

But this framing misses something critical: the dilemma is structural, not inevitable. It persists because market conditions make ethical behavior individually costly and collectively fragile. What’s needed isn’t choosing between profit and ethics, but changing the conditions so responsible innovation becomes cheaper, more scalable, and competitively neutral.

Reframing Ethics as System Design

The most important shift is moving from ethics as moral restraint to ethics as market infrastructure. Consider three design levers that change the incentive landscape: data sovereignty, interoperability, and process governance. These design choices transform ethical AI in financial services from aspirational principle to operational architecture.

Data sovereignty in financial services means borrowers retain meaningful control over how their data is accessed, shared, and used for credit decisions. This isn’t just privacy – it’s a mechanism that turns data from a surveillance tool into a negotiable resource. When borrowers actively choose which verified data to share for specific loan decisions, underwriting becomes collaboration rather than opaque extraction.

India’s Account Aggregator framework demonstrates this at scale: regulated entities enable secure, customer-authorized data sharing for financial services. The design lesson is transferable; when consent and purpose limitations are built into infrastructure, lenders access higher-quality, borrower-authorized signals without relying on shadow data practices that erode trust.
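To make consent and purpose limitation concrete, here is a minimal Python sketch of a check a lender could run before reading borrower data. The `ConsentGrant` class, its fields, and the example values are hypothetical and chosen for illustration; they are not the Account Aggregator schema or any Riverty interface.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ConsentGrant:
    """A borrower's permission to use specific data for one purpose (hypothetical shape)."""

    borrower_id: str
    purpose: str                # e.g. "loan_underwriting"
    data_types: frozenset[str]  # e.g. {"bank_transactions"}
    expires_at: datetime
    revoked: bool = False

    def permits(self, purpose: str, data_type: str, at: datetime) -> bool:
        """Return True only if purpose, data type, and time all match the grant."""
        return (
            not self.revoked
            and purpose == self.purpose
            and data_type in self.data_types
            and at < self.expires_at
        )


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
grant = ConsentGrant(
    borrower_id="b-123",
    purpose="loan_underwriting",
    data_types=frozenset({"bank_transactions"}),
    expires_at=now + timedelta(days=30),
)

# Same data, three different requests: only the first matches the grant.
underwriting_ok = grant.permits("loan_underwriting", "bank_transactions", now)
marketing_ok = grant.permits("marketing", "bank_transactions", now)
expired_ok = grant.permits(
    "loan_underwriting", "bank_transactions", now + timedelta(days=31)
)
```

In this sketch, data shared for underwriting cannot be reused for marketing or after the grant expires, because both requests fail the same check. That is the sense in which consent is built into infrastructure rather than left to policy documents.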

Interoperable financial data is the economic enabler of scalable responsible credit. When every lender needs bespoke integrations and every borrower needs to repeatedly upload documents, the market drifts toward lock-in and data monopolies. But when data access is standardized – through shared APIs, consistent schemas, and portable permissions – ethical constraints become affordable rather than prohibitive.

The EU’s push toward open finance through the proposed Financial Data Access (FiDA) framework illustrates this logic. By establishing rights and obligations for data sharing across financial services, such frameworks reduce coordination failures, lower integration costs, and enable responsible practices to scale across the market.
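The cost argument for consistent schemas can be sketched in a few lines of Python. Everything here is assumed for illustration: the `Transaction` record, the two provider formats, and the field names are hypothetical and do not represent FiDA, PSD2, or any published standard.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, datetime
from decimal import Decimal


@dataclass(frozen=True)
class Transaction:
    """Shared transaction record (hypothetical schema)."""

    booked_on: date
    amount_cents: int  # positive = inflow, negative = outflow
    currency: str


def from_provider_a(row: dict) -> Transaction:
    # Hypothetical provider A: ISO dates, signed decimal amounts as strings.
    return Transaction(
        booked_on=date.fromisoformat(row["date"]),
        amount_cents=int(Decimal(row["amount"]) * 100),
        currency=row["ccy"],
    )


def from_provider_b(row: dict) -> Transaction:
    # Hypothetical provider B: day.month.year dates, unsigned cents plus a direction flag.
    sign = 1 if row["direction"] == "CREDIT" else -1
    return Transaction(
        booked_on=datetime.strptime(row["bookingDate"], "%d.%m.%Y").date(),
        amount_cents=sign * row["value"],
        currency=row["currency"],
    )


def net_cash_flow_cents(transactions: list[Transaction]) -> int:
    """Underwriting logic written once against the shared schema."""
    return sum(t.amount_cents for t in transactions)


transactions = [
    from_provider_a({"date": "2025-02-03", "amount": "-49.99", "ccy": "EUR"}),
    from_provider_b(
        {"bookingDate": "01.02.2025", "value": 250000,
         "direction": "CREDIT", "currency": "EUR"}
    ),
]
net = net_cash_flow_cents(transactions)
```

Without a shared schema, every lender maintains an adapter like `from_provider_a` for every data source. With one, the adapters disappear and only the underwriting logic remains, which is where the integration savings come from.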

Model governance in credit scoring embeds accountability into the end-to-end credit lifecycle. This means governed AI credit models that produce not just scores, but stable reason codes that can be communicated in plain language and audited internally. It means explainable adverse action notices that tell applicants what the model saw and how they can challenge the decision. It means auditability and change control that prevent silent drift into proxy discrimination.
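As an illustration of stable reason codes, the following Python sketch returns a versioned decision together with the factors that cost the applicant the most points. The scorecard, weights, cutoff, reason-code catalogue, and model identifier are all invented for this example; a production model would be considerably more involved.

```python
from __future__ import annotations

from dataclasses import dataclass

MODEL_VERSION = "scorecard-demo-0.1"  # hypothetical identifier, stored for audit trails

# Hypothetical catalogue: stable codes mapped to plain-language explanations.
REASONS = {
    "R01": "Recent missed payments on existing credit",
    "R02": "High share of income already committed to debt",
    "R03": "Short history of regular income",
}


@dataclass(frozen=True)
class Decision:
    model_version: str
    score: int
    approved: bool
    reason_codes: tuple[str, ...]


def decide(
    missed_payments: int,
    debt_to_income: float,
    months_of_income: int,
    cutoff: int = 60,
) -> Decision:
    # Points lost per factor, keyed by reason code (illustrative weights only).
    penalties = {
        "R01": min(missed_payments, 3) * 15,
        "R02": 30 if debt_to_income > 0.4 else 0,
        "R03": 20 if months_of_income < 6 else 0,
    }
    score = 100 - sum(penalties.values())
    approved = score >= cutoff
    # Rank factors by points lost; the sort is stable, so ties keep catalogue order.
    ranked = sorted(
        (code for code, lost in penalties.items() if lost > 0),
        key=lambda code: -penalties[code],
    )
    reason_codes = () if approved else tuple(ranked[:2])
    return Decision(MODEL_VERSION, score, approved, reason_codes)


decision = decide(missed_payments=2, debt_to_income=0.5, months_of_income=12)
explanation = [REASONS[code] for code in decision.reason_codes]
```

Because each decision carries the model version and codes drawn from a fixed catalogue, the same record can feed the applicant-facing explanation and the internal audit trail. A change to the weights or the catalogue then shows up as a new version rather than as silent drift.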

The UK’s Prudential Regulation Authority sets out supervisory expectations for model risk management precisely because disciplined governance reduces operational risk, prevents uncontrolled feature creep, and forces institutions to document what models are for, what they’re not for, and when they should be overridden. This isn’t compliance theater – it’s profitability protection through responsible use of data in credit.

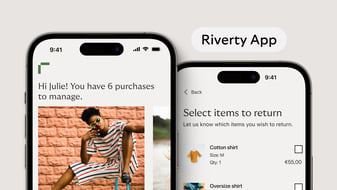

At Riverty, we’re building this governance into our credit decisioning infrastructure, treating model risk management, auditability, and change control as core operational capabilities, not compliance add-ons.

How These Levers Dissolve the Trade-Off

When data sovereignty, interoperability, and process governance work together, they change the economics of ethical behavior. Sovereignty without interoperability creates friction. Interoperability without sovereignty enables surveillance. Both together, without process governance, become unaccountable automation. But when all three are in place, they enable a credit market where inclusion can be pursued without sacrificing agency, and profitability can be pursued without relying on opaque discrimination.

Lenders gain better verified signals, lower operational uncertainty, and reduced compliance and reputational risk. Borrowers gain control, transparency, and meaningful recourse. Regulators gain visibility into how systems operate, making markets more stable and supervision more effective.

Crucially, these levers make ethical conduct competitively viable. When interoperability and explainability are standardized across the market through regulation, responsible practices don’t remain discretionary costs – they become baseline expectations all players must meet. Trust, transparency, and fair process design become dimensions of competition rather than competitive disadvantages.

Lenders differentiate themselves by offering clearer explanations, more predictable product behavior, and more robust recourse mechanisms. This reduces customer acquisition costs, increases long-term loyalty, and builds reputational capital that protects against regulatory scrutiny and public backlash.

Why Regulation Enables Rather Than Constrains

The role of regulation isn’t to ban innovation; it’s to create the level playing field that makes responsible innovation scalable and competitively neutral. Regulation as innovation enabler means establishing baseline requirements that prevent a race to the bottom while channeling competition toward quality, transparency, and customer outcomes.

The EU’s emerging regulatory framework illustrates this. The AI Act classifies credit scoring systems as high-risk, triggering concrete obligations around risk management, data governance, transparency, and human oversight. The revised Consumer Credit Directive strengthens expectations around creditworthiness assessment and explicitly addresses automated processing. The Digital Operational Resilience Act sets baselines for ICT risk management when credit decisions rely on outsourced services and external data pipelines.

These aren’t obstacles to AI credit – they’re the infrastructure that makes it trustworthy. By mandating verifiable governance, controllable data practices, and robust operational processes, regulation ensures all actors operate under comparable constraints. This prevents a race to the bottom where ethical restraint gets punished and irresponsible automation gets rewarded.

The same logic applies to interoperability frameworks. PSD2 created regulated access to payment account data, enabling cash-flow-based underwriting without requiring lenders to rely on unstructured or intrusive alternative data. Open finance initiatives extend this model across financial services, reducing lock-in and enabling competition on service quality rather than proprietary data hoarding.

When these regulatory building blocks are in place, sustainable credit innovation becomes the path of least resistance rather than the path of most friction.

Long-Term Resilience Through Responsible Design

Ethical AI in credit isn’t a short-term cost – it’s a long-term investment in market stability, customer trust, and regulatory legitimacy. Markets that optimize purely for speed and margin without accounting for fairness create systemic risks: over-indebtedness, exclusion, reputational scandals, regulatory crackdowns, and erosion of public trust in financial institutions. These risks don’t show up in quarterly earnings, but they compound over time, and when they materialize, they can destabilize entire business models.

Responsible credit systems are built for durability. They reduce dispute volume, lower compliance costs, improve customer retention, and create reputational capital that protects against competitive and regulatory pressure. They’re also more adaptable: when regulations tighten or public expectations shift, systems designed with fairness and transparency from the start can adjust without requiring fundamental rebuilds.

At Riverty, we approach hyperindividualized lending with this principle: the perceived trade-off between profit and ethical behavior isn’t an economic inevitability – it’s a governance deficit. When data sovereignty in financial services, interoperability, and process design are treated as infrastructure rather than optional add-ons, responsible innovation becomes both feasible and profitable.

Ethical credit is market infrastructure. And infrastructure, when built well, enables growth rather than constraining it.

Frequently Asked Questions

What does responsible innovation in credit mean?

Responsible innovation means building AI credit systems where ethical design choices – fairness testing, explainable decisions, data sovereignty, and robust governance – are embedded from the start. It’s about making deliberate choices regarding which data sources are permitted, how bias is tested, where human oversight remains essential, and how borrowers can contest outcomes. This treats ethics as system architecture rather than moral restraint.

Why do profit and ethics appear to conflict in AI credit?

The perceived conflict emerges from market conditions that make responsible behavior individually costly when competitors can cut corners. But when data sovereignty, interoperability, and process governance are standardized through regulation, ethical conduct becomes competitively neutral. Responsible systems reduce operational risks – fewer disputes, lower compliance costs, stronger customer retention – that protect long-term profitability. Additionally, transparency and fairness become competitive differentiators that reduce customer acquisition costs and build reputational capital.

How do data sovereignty and interoperability work together?

Data sovereignty ensures borrowers retain control over which data is shared, for what purpose, and for how long, transforming data from surveillance tools into negotiable resources. Interoperability makes this practical by standardizing data access through shared APIs and portable permissions, reducing integration costs and preventing lock-in. Together, they enable lenders to access higher-quality, borrower-authorized signals while borrowers maintain agency, can switch providers, and can challenge decisions.

What role does regulation play in responsible credit innovation?

Regulation creates the level playing field that makes responsible practices competitively neutral and scalable. Frameworks like the EU AI Act, Consumer Credit Directive, and FiDA establish baseline requirements for fairness, explainability, and governance that all participants must meet, preventing a race to the bottom. This doesn’t constrain innovation; it channels it toward sustainable outcomes by making governed AI credit models standard infrastructure rather than optional add-ons, enabling competition on service quality while protecting borrowers and market stability.