Explainable Credit Decisions: Transparency That Builds Trust

Speed and automation only create value when customers can clearly understand outcomes and act on them. AI-driven credit decisioning promises efficiency, but when decisions lack transparency, trust erodes. Ethical AI in financial services must deliver not just accurate risk assessments, but explainable credit decisions that borrowers can understand and challenge. This isn’t just regulatory compliance; it’s the foundation of fair access to credit, operational resilience, and sustainable customer relationships, and it is central to responsible AI in credit scoring.

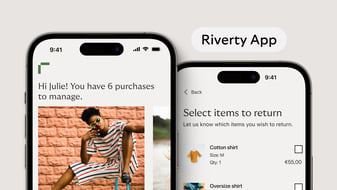

At Riverty, we believe credit decisions must be understandable, contestable, and built for accountability. Because in AI fairness in lending, transparency isn’t optional – it’s what makes automation trustworthy.

Why Black-Box Decisions Undermine Trust

Hyperindividualized credit relies on complex models that process diverse data sources – transaction patterns, behavioral signals, alternative credit indicators – to assess risk and set terms. These models can be more accurate than traditional approaches, especially for thin-file consumers seeking fair access to credit. But accuracy without explainability creates a legitimacy problem.

When someone receives a generic response like “Our assessment indicates elevated risk,” they’re left with no meaningful path forward. They don’t know which factors drove the decision, whether the data was accurate, or what they could change. The decision might be statistically sound, but it feels opaque and unchallengeable.

This matters because credit decisions deeply affect people’s lives: access to housing, transportation, education, entrepreneurship, and resilience against financial shocks. When decisions happen inside algorithmic systems borrowers cannot see into or contest, the relationship shifts from partnership to power imbalance. Inclusive credit scoring requires not just better data, but meaningful transparency that enables people to act on outcomes.

Research on public attitudes toward algorithmic decision-making shows people are more skeptical of AI tools in high-stakes contexts when they anticipate unfair treatment or inability to challenge outcomes. Better predictive performance alone doesn’t resolve legitimacy concerns when decisions feel arbitrary or recourse feels impossible.

Where Explainability Breaks Down

The challenge isn’t just that AI models are technically complex – it’s that explanations often fail to translate model outputs into information customers can actually use.

A model might generate a risk score based on dozens of weighted variables, each contributing fractionally to the final decision. But telling someone “Your score decreased because of a combination of 47 factors weighted according to statistical importance” is noise, not an explanation.

Even when lenders provide reason codes, they often stay at category level: “insufficient credit history,” “high debt-to-income ratio,” “recent credit inquiries.” These phrases describe what the model saw but don’t help borrowers understand what happened or what they can do about it.
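To make the gap between a category-level reason code and a usable explanation more concrete, here is a minimal Python sketch. It assumes a simple linear scorecard, which is far simpler than the models discussed in this article; the factor names, weights, baseline values, and message wording are all hypothetical and are not taken from any real lender's system. The idea is to rank the factors that raised an applicant's risk relative to a typical applicant, then show only the top few in plain language with the applicant's own numbers.

```python
# Illustrative only: weights, baselines, and wording are hypothetical assumptions.
WEIGHTS = {
    "credit_utilization": 2.0,   # share of available credit in use (0.0-1.0)
    "debt_to_income": 1.5,       # monthly debt payments / monthly income
    "recent_inquiries": 0.3,     # credit applications in recent months
    "months_of_history": -0.02,  # a longer history lowers risk
}

# A "typical" applicant used as the reference point for each factor.
BASELINE = {
    "credit_utilization": 0.30,
    "debt_to_income": 0.25,
    "recent_inquiries": 1,
    "months_of_history": 60,
}

# Plain-language templates that name the applicant's value and a direction to move in.
MESSAGES = {
    "credit_utilization": (
        "You are using {value:.0%} of your available credit. "
        "Bringing this closer to {baseline:.0%} would lower your assessed risk."
    ),
    "debt_to_income": (
        "Your debt payments take up {value:.0%} of your income, "
        "compared with a typical {baseline:.0%}."
    ),
    "recent_inquiries": (
        "You applied for credit {value} times recently. "
        "Spacing out applications would help."
    ),
    "months_of_history": (
        "Your credit history covers {value} months, "
        "compared with a typical {baseline} months."
    ),
}


def contributions(applicant):
    """Risk contribution of each factor relative to the baseline applicant."""
    return {name: w * (applicant[name] - BASELINE[name]) for name, w in WEIGHTS.items()}


def rank_adverse_factors(applicant):
    """Names of factors that raised risk, largest contribution first."""
    scores = contributions(applicant)
    adverse = [name for name, score in scores.items() if score > 0]
    return sorted(adverse, key=lambda name: scores[name], reverse=True)


def explain(applicant, limit=2):
    """Plain-language messages for the top adverse factors only."""
    top = rank_adverse_factors(applicant)[:limit]
    return [MESSAGES[n].format(value=applicant[n], baseline=BASELINE[n]) for n in top]
```

Limiting the output to the top few factors is a deliberate choice in this sketch: it avoids the "47 factors" problem while still pointing to something the applicant can verify or change. A production system would also need to check that each stated reason faithfully reflects what the deployed model did.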

Worse, when decisions depend on alternative data or inferred attributes, borrowers may not recognize the signals being used. Someone declined for credit might later discover that shopping behavior, device metadata, or geolocation patterns influenced the outcome – data they never consciously provided as credit information and signals they have no clear way to correct.

This makes recourse practically impossible. Credit reporting systems already show how damaging errors can be and how difficult disputes are to navigate. Hyperindividualization multiplies failure points: more data sources, more derived attributes, more model updates, more automated limit changes. When something goes wrong, borrowers face a diagnostic challenge that can feel insurmountable.

Why Contestability Matters as Much as Explainability

Transparency without recourse is performative. Customers need not just to understand decisions, but to challenge them when data is wrong, when circumstances have changed, or when automated systems produce outcomes that don’t reflect reality.

This requires building correction mechanisms directly into the credit lifecycle. When someone disputes a decision, they shouldn’t face a wall of automated responses. They should be able to submit updated information, request human review for edge cases, and receive timely resolution that doesn’t derail their financial plans.

Contestability also protects lenders. When customers can challenge outcomes, errors surface earlier. Disputes get resolved before they escalate into regulatory complaints or litigation. And when recourse mechanisms work well, they generate signals that help lenders improve models and identify edge cases where automation fails, strengthening AI fairness in lending across the system.

The revised EU Consumer Credit Directive explicitly recognizes this. It requires that when automated processing is used, consumers have rights to human intervention, an explanation of the assessment including logic and risks, the ability to express a viewpoint, and the right to request review. Rejected applicants must be informed without delay and, where relevant, referred to accessible support services.

These aren’t bureaucratic requirements – they’re structural safeguards that make AI credit systems governable when they work and recoverable when they fail.

Building Explainability Into the Process

The most effective approach to transparency is designing for it from the start rather than retrofitting explanations after models are deployed. This means establishing clear policies about which data can be used and why. It means documenting model objectives, limitations, and edge cases where human oversight in AI systems is required. It means creating audit trails that show which information drove which decision, so disputes can be reconstructed and evaluated.
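As one way to picture such an audit trail, the following Python sketch stores, for each decision, the inputs used, where each input came from, the model version, and the reasons given. The `DecisionRecord` fields and the helper functions are hypothetical names chosen for illustration; they do not describe Riverty's systems or any specific regulatory format.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical audit entry: enough to reconstruct one decision later."""
    application_id: str
    model_version: str
    inputs: dict        # factor name -> value the model actually used
    data_sources: dict  # factor name -> where that value came from
    outcome: str        # e.g. "approved" or "declined"
    reasons: tuple      # reason messages shown to the customer
    decided_at: str     # ISO 8601 timestamp


def fingerprint(record):
    """Stable SHA-256 hash of the record, so later tampering can be detected."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def disputed_inputs(record, corrections):
    """Compare customer-supplied corrections with what the decision used.

    Returns {factor: (recorded_value, corrected_value)} for each mismatch.
    """
    return {
        name: (record.inputs[name], corrected)
        for name, corrected in corrections.items()
        if name in record.inputs and record.inputs[name] != corrected
    }
```

With a record like this, a dispute handler can see which recorded values differ from the customer's corrections and which data source supplied them, rather than re-running an opaque pipeline and hoping the result is comparable.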

It also means testing explanations with actual customers, not just compliance teams. An explanation that satisfies regulators but confuses borrowers hasn’t achieved its purpose. Language needs to be plain, specific, and grounded in actions customers can take.

From an operational perspective, explainability becomes a profitability enabler when embedded in the process. Clearer explanations reduce call center volume. Faster dispute resolution lowers escalation costs. Better transparency builds customer confidence, increasing loyalty and reducing churn. When models are designed with explanation requirements in mind, they tend to be more robust, auditable, and easier to monitor for drift or bias in credit scoring.

At Riverty, we’re building explainability into our credit decisioning approach – not as a compliance add-on, but as core infrastructure that makes fair credit decisions scalable, auditable, and trustworthy.

Trust as Infrastructure

Hyperindividualized lending will only remain viable if customers trust the system is fundamentally fair, that decisions make sense, and that they have meaningful agency when things go wrong.

Explainability and contestability make trust durable. They turn credit relationships from opaque, take-it-or-leave-it transactions into partnerships where both sides have responsibilities and recourse. They enable inclusive credit scoring that doesn’t just widen access, but does so in ways people can understand and act on.

At Riverty, our approach to ethical AI in financial services starts with this principle: speed and personalization are valuable, but only when paired with transparency customers can understand and with challenge mechanisms they can actually use. Because when credit decisions feel arbitrary, trust erodes – and without trust, even the most accurate models become liabilities rather than assets.

Frequently Asked Questions

Lenders using AI-driven systems should provide specific, actionable explanations that identify which factors led to the decision. Rather than generic responses like “elevated risk,” you should receive clear information about what the model considered, such as high credit utilization, insufficient income relative to expenses, or recent credit inquiries. You have the right to ask which data was used, whether it’s accurate, and how to correct errors or provide updated information that might change the outcome.

Yes. Under frameworks like the EU Consumer Credit Directive, you have rights to human intervention, an explanation of how the decision was made, the ability to express your viewpoint, and the right to request a review. If you believe data is inaccurate or circumstances weren’t properly considered, you can submit corrections, provide additional documentation, or request manual review by credit professionals who can account for context the automated system might have missed.

Lenders may use traditional credit bureau data, verified income and transaction history, employment stability indicators, and alternative signals like cash-flow patterns or payment behavior. However, ethical AI in financial services requires that signals are causally relevant to repayment ability – not opaque proxies like purchasing patterns, device metadata, or social network activity. You should be able to understand what data influenced your decision and challenge information that’s inaccurate or irrelevant.

Fair decisions are explainable, contestable, and based on relevant signals rather than discriminatory proxies. If you receive a decision you don’t understand, ask for specific reasons. If the explanation references factors that seem unrelated to your ability to repay – or if you suspect the decision relied on characteristics like where you live, what you buy, or your demographic profile – you can challenge it and request human review to ensure fairness.

Contact the lender immediately to dispute the decision and identify the specific data you believe is incorrect. Provide documentation that supports your position: updated income statements, corrected account information, or evidence that circumstances have changed. Request a manual review if the automated system doesn’t account for your situation. Keep records of all communications and timelines, and if the lender doesn’t resolve the issue, you can escalate to regulatory authorities or consumer protection services.